YouTube: One Step Forward, Two Steps Back

YouTube’s actions continue to fall short of its rhetoric when it comes to fighting extremism. Despite very public announcements of new technologies, more content reviewers, and a stated commitment to preventing terrorists and extremist groups from using the site, YouTube still falls short in three areas: removing Anwar al-Awlaki content, preventing the initial upload of new official ISIS content, and removing videos made by ISIS supporters. While YouTube has made progress in deleting thousands of hours of gruesome content and videos that urge violence, there is still a troubling amount of room for improvement.

After years of pressure, YouTube officially began to remove all Awlaki videos last November. A leader of al-Qaeda in the Arabian Peninsula (AQAP), Awlaki has been tied to 55 extremists in the U.S., either through direct communication while he was alive or through the spread of his sermons and lectures on platforms like YouTube. YouTube’s pledge to remove all Awlaki content was praised on both sides of the aisle, with Senators Cotton and Feinstein both voicing their support. Yet Awlaki content remains on YouTube. Simple searches in late January revealed 14 Awlaki videos still on the site, including some uploaded in December, well after YouTube’s ban took effect. It is very likely that even more Awlaki videos have returned to the site, disguised just well enough to evade simple searches. This calls into question YouTube’s long-term commitment to keeping banned content from being reintroduced onto the platform.

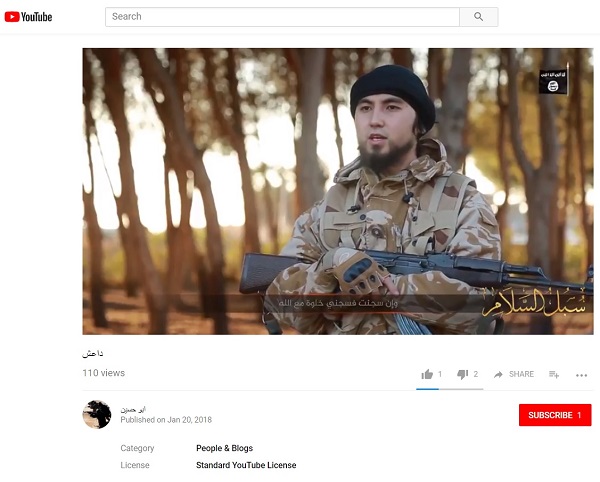

New ISIS videos can remain on YouTube for days. The company says it removes extremist videos using hashing technology, which functions like a video fingerprint. Once a hash is created from a video, it can be used to block reuploads of that same footage. When applied correctly, hashing can indeed keep extremist and terrorist propaganda from reaching the platform in the first place. Unfortunately, YouTube appears to lack clear procedures and sufficient capacity to rapidly identify new content and create the hashes needed to remove new ISIS propaganda films. In three recent cases, official ISIS videos calling for terrorist attacks stayed on the site for 2 days, 6 hours, and 3 hours, respectively, garnering thousands of views. In early January, the European Commission set a goal of removing terrorist content, both new videos and reuploads, within 2 hours. While YouTube has improved the rate at which it removes reuploaded content, 2 days, or even 3 hours, is too long for terrorist content to remain active and shareable on the site.
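To illustrate why hashing alone cannot catch brand-new propaganda, the minimal sketch below models a fingerprint blocklist. It assumes an exact SHA-256 digest as a stand-in for a video fingerprint; real systems generally rely on perceptual hashes that survive re-encoding, and YouTube has not disclosed its actual method. The `HashBlocklist` class and `fingerprint` function are hypothetical names for illustration only. The point it shows: a reupload of hashed footage is blocked, but new footage passes until someone identifies it and adds its hash.

```python
import hashlib


def fingerprint(video_bytes: bytes) -> str:
    # Assumption: an exact SHA-256 digest stands in for a video fingerprint.
    # Production systems typically use perceptual hashes that tolerate
    # re-encoding or cropping; an exact digest only matches identical bytes.
    return hashlib.sha256(video_bytes).hexdigest()


class HashBlocklist:
    """Hypothetical blocklist of fingerprints for footage already identified as banned."""

    def __init__(self) -> None:
        self._hashes: set[str] = set()

    def add(self, video_bytes: bytes) -> None:
        # A reviewer (human or automated) must first identify the video;
        # only then can its fingerprint be added to the list.
        self._hashes.add(fingerprint(video_bytes))

    def allows_upload(self, video_bytes: bytes) -> bool:
        # Reject the upload only if its fingerprint matches a known hash.
        return fingerprint(video_bytes) not in self._hashes


blocklist = HashBlocklist()
known_video = b"previously identified propaganda footage"
new_video = b"newly released propaganda footage"

blocklist.add(known_video)
print(blocklist.allows_upload(known_video))  # False: reupload of hashed footage is blocked
print(blocklist.allows_upload(new_video))    # True: new footage passes until it is hashed
blocklist.add(new_video)
print(blocklist.allows_upload(new_video))    # False: blocked once its hash is added
```

The gap between a video's first upload and the moment its hash is added is exactly the window in which new official content can circulate.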

Additionally, thinly veiled pro-ISIS media groups are still active on YouTube. An English-language channel calling itself the “War and Media Agency,” which purports to be a documentary news program, has been on the platform for more than a month. Several of its videos have thousands of views, and the channel has more than 150 subscribers. The “War and Media Agency” is, in fact, an online pro-ISIS front that uses ISIS-produced photos and a robotic voice generator to broadcast statements from ISIS’s Amaq News Agency. It is disconcerting that such a low level of subterfuge could fool both YouTube’s content reviewers and the site’s machine learning algorithms and vaunted artificial intelligence.

It has been almost three and a half years since the first ISIS beheading videos appeared on YouTube, and far longer since extremists began using the website to amplify their calls for violence. It is unacceptable that YouTube has not fully solved either problem despite repeated assurances. The situation is compounded by YouTube’s lack of transparency about which videos have been hashed and how those hashes are deployed on the site. A more proactive and transparent approach will be critical to preventing the next terrorist organization, hate group, or propagandist from making the site a pillar of its online strategy.
