(New York, N.Y.) — The Counter Extremism Project (CEP) produces a weekly report on the methods used by extremist and terrorist groups on the Internet to spread their ideologies and incite violence. Last week, CEP researchers identified around three dozen accounts on TikTok, Instagram, and Twitter/X that praised white supremacist terrorism, including the Christchurch attack.

Additionally, CEP researchers located an audio message from ISIS spokesperson Abu Hudhayfah al-Ansari on RocketChat, Telegram, and pro-ISIS websites calling for attacks on Jews and their allies around the world, as well as on Western and Arab states. In another post on RocketChat, the pro-ISIS tech group Qimam Electronic Foundation (QEF) shared a guide for inspecting suspicious URLs to avoid clicking on harmful links.

CEP researchers also located a video in which the founder of the neo-Nazi group The Base, posting on a Russian video streaming platform, urged followers to prepare for war. On Telegram, he recommended an AI assistant that could allegedly bypass content restrictions and potentially be used for criminal purposes.

Finally, large quantities of AI-generated content, antisemitic rhetoric, and conspiracy theories, including the blood libel and QAnon-related narratives, were found on Twitter/X and Telegram following the arrest of Jewish worshippers during a confrontation over an illegally constructed tunnel at a Brooklyn synagogue.

Extreme-Right and Neo-Nazi Content, Including Content Glorifying White Supremacist Terrorists, Located on TikTok, Instagram, and Twitter/X

In a sample of content located on January 10, CEP researchers found 37 accounts on TikTok, Instagram, and Twitter/X that spread extreme-right or neo-Nazi content or glorified acts of terrorism committed by white supremacists.

CEP found 18 accounts on TikTok that glorified or encouraged acts of violence. Content included modified and unmodified footage from the Christchurch attack video, including clips edited into longer violent videos. Other accounts praised the white supremacist perpetrators of the 2015 Charleston church shooting and the May 2022 Buffalo attack. Two accounts posted excerpts from the manifestos of both the Christchurch attacker and the perpetrator of the August 2019 attack targeting Latinos at an El Paso Walmart. One account with over 1,000 followers specifically advocated violence against Jews. The 18 accounts averaged 438 followers, ranging from 0 (in one instance) to 1,388 for an account that expressed support for the attacks in Christchurch, Halle, and Charleston.

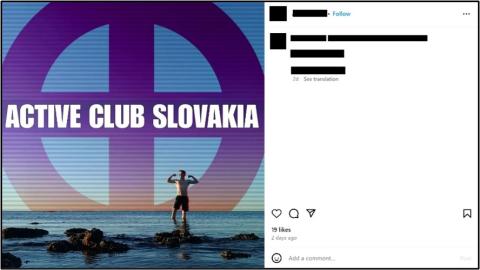

CEP located nine extreme-right accounts on Instagram. Six accounts were affiliated with white supremacist Active Clubs in Slovakia, Estonia, Ireland, Brazil, and Romania, with one account for an affiliated group in New York, New Jersey, and Pennsylvania. Other accounts located on Instagram posted antisemitic and neo-Nazi propaganda. One account with over 70 followers posted a video containing violent footage from the Christchurch and Buffalo terrorist attacks. The nine accounts averaged 261 followers, ranging between 1 and 1,597.

Lastly, CEP researchers located ten extreme-right or neo-Nazi accounts on Twitter/X. Four accounts, two created in November 2023 and two in December 2023, were affiliated with Active Clubs in Romania, Ohio, Canada, and an area spanning Missouri and Illinois. The remaining six accounts posted violent footage from either the Christchurch or the Buffalo terrorist attacks. Despite the low follower counts (0 to 39) of the Twitter/X accounts that glorified or advocated acts of terrorism, the violent videos they posted received hundreds of views in some cases. An account with only one follower posted a violent clip from the Christchurch attack in response to a tweet from a far-right British politician; the video, which glorified the Christchurch attacker, received over 700 views. A different account with only eight followers posted a violent clip glorifying the Buffalo attacker that received over 550 views.

“TikTok, Instagram, and Twitter/X should significantly increase resources devoted to identifying and immediately removing content from their platforms that depicts extreme violence, glorifies terrorists, and spreads extremist propaganda. Tech companies are well aware of the scope of the threat to users and the public, and they have a responsibility to uphold their content moderation policies,” said CEP researcher Joshua Fisher-Birch. “Accounts connected to Active Clubs, a known white supremacist movement recruiting around the world, and that use standardized logos and symbols, should be removed. Extremist and terrorist content is spreading on these major platforms, among others, increasing the potential for radicalization, recruitment, and real-world violence.”

CEP reported all accounts to TikTok, Instagram, or Twitter/X, or to relevant national authorities. CEP directly reported six accounts to TikTok. Six days later, three had been removed, but the accounts that remained on the platform glorified white supremacist and antisemitic attackers. Of the eight accounts reported directly to Instagram, only one was removed within the same time frame. Accounts that remained on Instagram included those affiliated with Active Clubs as well as profiles that posted antisemitic and anti-transgender content. All the accounts reported to Twitter/X, which were connected to neo-Nazi Active Clubs, were still on the platform six days later.

A post on Instagram advertising a Slovakian chapter of the Active Club movement. Screenshot taken on January 11.

ISIS Spokesperson Calls for Global Attacks

In an audio message titled “And Kill Them Wherever You Find Them” released on Telegram, RocketChat, and pro-ISIS websites on January 4, ISIS’s spokesperson Abu Hudhayfah al-Ansari called for attacks around the world on the group’s opponents.

Framing Israel’s military operations in Gaza as part of a long history of conflict between Jews and Muslims, al-Ansari stated that the fight should be religious and not waged under the banner of nationalism or any non-religious ideology. He went on to condemn Hamas for its supposed democratic character and its ties to Iran, whose regional ambitions he also denounced. Al-Ansari also criticized Arab rulers for failing to support the Palestinian people and for allegedly allying with Israel, stating that the fight “is really a battle with the allies of the Jews more so than with the Jews themselves.”

Al-Ansari called for ISIS’s supporters to commit attacks on Jews and their allies around the globe, as well as against Western and Arab governments.

Following the release of the audio message, ISIS announced a global campaign of offensives titled “And Kill Them Wherever You Find Them.” On January 9, a pro-ISIS Telegram account claimed that the terrorist group had carried out 94 attacks as part of the campaign in Syria, Nigeria, Congo, Cameroon, Mali, the Philippines, Mozambique, Afghanistan, and Iraq. CEP researchers counted 76 claimed ISIS attacks as part of the “And Kill Them Wherever You Find Them” campaign between January 4 and January 11.

Also on January 9, a pro-ISIS Telegram channel defended al-Ansari’s call to attack Arab Gulf states, describing these governments as Israel’s rear line of defense. The post also stated that several of these countries host U.S. military bases.

Following al-Ansari’s speech, pro-ISIS propaganda groups released posters calling for attacks on civilians in the West, including attacks on synagogues and churches.

ISIS’s Al-Furqan announcement for the “And Kill Them Wherever You Find Them” audio message by spokesperson Abu Hudhayfah al-Ansari. Screenshot taken on January 4.

Pro-ISIS Tech Group Posts Guide for Checking Potentially Suspicious URLs

On January 5, the pro-ISIS Qimam Electronic Foundation (QEF) posted a guide on RocketChat for inspecting suspicious URLs. The guide recommended checking websites with ScanURL, PhishTank, VirusTotal, and IPQualityScore’s suspicious URL scanner to avoid harmful links. Members of other pro-ISIS RocketChat rooms have recently accused users of posting potentially suspicious links, including alleged links to content on other websites.

Qimam Electronic Foundation logo. Screenshot taken on January 11.

Founder of The Base Recommends AI Assistant, Urges Supporters to Prepare for “War”

On January 9, the founder of the neo-Nazi group The Base, Rinaldo Nazzaro, recommended that his followers on Telegram use a specific AI assistant to receive “uncensored responses” to questions, including those “potentially criminal in nature.” Nazzaro claimed that he did not technically advocate using the AI assistant for illegal purposes but acknowledged that it could be used for them. The AI assistant was designed by a company that develops commercial machine-learning products.

On January 11, Nazzaro posted a video on a Russian video streaming website telling his supporters that all of their activism should be geared toward preparing for violent conflict. Nazzaro stated, “War, that’s what our aim is. That’s what it needs to be, and everything we do needs to be geared towards that goal. Towards gearing up for war, in terms of actual no-shit trigger pullers that we need to recruit and the infrastructure which to support them.” Nazzaro described community building or propaganda activities not geared toward this goal as a waste of time. This message is consistent with some earlier propaganda released by The Base and by elements of the online neo-Nazi sphere that endorse violence, but it was more direct than Nazzaro’s previous statements.

Antisemitic and White Supremacist Online Accounts Spread Disinformation and Propaganda, Including AI-Generated Content, Related to Crown Heights Tunnel

Extreme right-wing and white supremacist accounts on Twitter/X and Telegram spread a large quantity of disinformation and antisemitic propaganda following the discovery of an illegal tunnel in the main synagogue of the Hasidic Chabad-Lubavitch movement in Crown Heights, Brooklyn. The NYPD arrested nine worshippers at the synagogue on January 8 during a violent confrontation that broke out after a construction crew began to fill in the tunnel. According to a Chabad spokesperson, a radical faction had constructed the tunnel, which connected the synagogue with a neighboring building.

Dozens of users on Twitter/X and Telegram posted antisemitic content in response. Much of the propaganda invoked the blood libel, accused Jews of pedophilia, or relied on antisemitic tropes comparing Jews to rats. Numerous posts repackaged conspiracy theories tied to Pizzagate and QAnon. Telegram channels spread multiple pieces of antisemitic AI-generated content, including an image portraying Jews as rodents that was posted within hours of the tunnel receiving considerable attention online. The image received almost 7,000 views within three days.

Users of 4chan’s /pol/ board also posted a torrent of antisemitic content, including memes and AI-generated images.