Antisemitism Continues To Proliferate On Facebook

Facebook executives have tried to cultivate an image of the company as a model of responsibility, transparency, and consistency, but the company repeatedly falls short in all three areas. Facebook has failed to protect its platform with clear policies and robust, consistent enforcement. This failure is particularly evident in its inability to stop the spread of antisemitic content, even though the company has banned such content for many years.

A July 2018 investigation by The Times exposed how Facebook was hosting posts that called Jews "barbaric and unsanitary," depicted Jews as cockroaches, and linked to Holocaust denial fan pages. All of this was a blatant violation of the company's Community Standards, which regulate acceptable content and behavior on Facebook. Since then, Facebook has updated its hate speech policy, expanding it to prohibit content that "denies or distorts the Holocaust" or includes "anti-Semitic stereotypes about the collective power of Jews." As is typical for the company, the measure was reactive, and antisemitic content continues to persist and proliferate on Facebook even though it clearly falls within what company policies restrict.

Sadly, this pattern is routine for Facebook. The company's playbook is to deny, delay, and deflect in the face of crises and criticism. Company officials hedge against attacks by citing the scale of the challenge and shifting blame onto other, smaller platforms. More often than not, little if any real change follows.

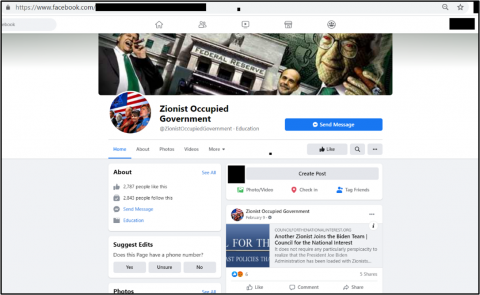

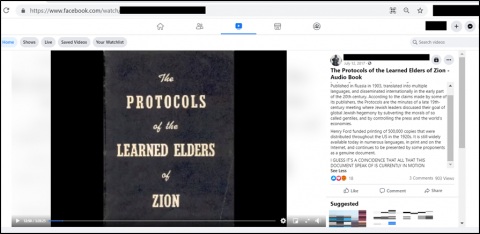

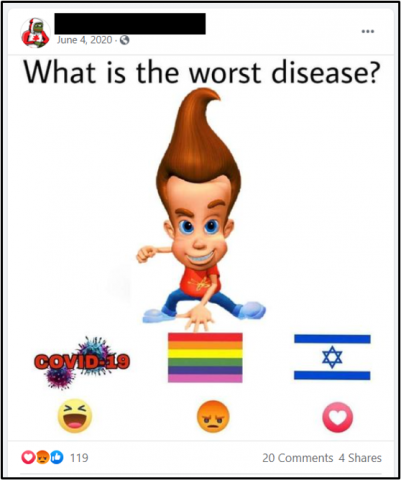

Today, Facebook hosts public groups with thousands of followers alleging that Jews secretly control the U.S. government; audiobook versions of The Protocols of the Learned Elders of Zion, which present the text as truth without any mention of its fraudulent nature; and individual posts on meme pages describing Jews and Israelis as a "disease."

Clearly, Facebook is only superficially serious about identifying violations of its Community Standards and protecting users from the kinds of antisemitic content that have too often fueled real-world violence.

Verifiably improving its content moderation will require Facebook to invest the extensive resources the problem demands, an investment well within reach for a company with $86 billion in revenue. The spread of antisemitic content online causes real-world harm. Extremists use it for recruitment, and it has been directly linked to attacks on Jewish communities. Facebook has taken a public stand and has the resources to follow through. It is time to start asking its leadership why the company is failing to do so.
